Google Search Essentials are the core guidelines that brands and businesses must follow to appear and perform well in search results. Following them increases the chances that your web content is visible to users. Whether you are a business, blogger, content creator, or startup, you can improve your search visibility by meeting these requirements:

- Technical Requirements: The minimum technical conditions that Google needs a website to meet.

- Spam Policies: Manipulative practices that website owners should avoid.

- Key Best Practices: Steps that improve how your site appears in Google Search.

Brands and businesses must consider many rules when chasing the #1 position in search results for competitive search terms. By meeting these core guidelines, you optimize the technical areas, avoid violating spam policies, and ensure your content is written for users.

Note: Meeting all these requirements does not guarantee that Google will crawl, index, and serve all your content. Before jumping to any conclusion, understand how search works.

Technical Requirements

Building a website is only the first step toward establishing a digital presence. Meeting the technical requirements is essential for making your web pages eligible for visibility in search results. A page that fulfills the following key requirements is eligible for indexing:

1. Googlebot isn’t blocked

Imagine your office has two entrance gates on opposite sides. If one gate is closed, visitors cannot enter through that route and may not be able to access your services at all. Similarly, in SEO, if you block Googlebot, it cannot access your website’s pages. As a result, it cannot crawl your content, which means your pages may not appear in search results.

If you want your web pages to be found, check that important crawlers such as Googlebot are not blocked in your robots.txt file. Also, remember that Google only indexes pages that the public can access. A page that requires a login to view its information is considered private, and Googlebot will not crawl it.

If you already have Search Console set up, you can review the pages that are inaccessible or blocked by robots.txt in the Page Indexing report or the Crawl Stats report, or test an individual URL with the URL Inspection Tool.
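As a quick local check before turning to Search Console, the robots.txt rule described above can be sketched with Python's standard `urllib.robotparser` module. The robots.txt content, the `is_allowed` helper, and the `example.com` URLs are made up for illustration. Python's parser also may not match Google's own parsing in every edge case, so treat the result as a first hint rather than a verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used only for this illustration.
SAMPLE_ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/

User-agent: *
Disallow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for path in ("/blog/post", "/private/report"):
        url = "https://www.example.com" + path
        print(path, is_allowed(SAMPLE_ROBOTS_TXT, "Googlebot", url))
    # /blog/post True
    # /private/report False
```

In this sample, Googlebot may fetch everything except `/private/`, while every other crawler is blocked from the whole site.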

2. Google receives an HTTP 200 (success) status code

The HTTP 200 (success) status code is a web server response that shows the client's request was received and fulfilled successfully. In short, it means the page works. You can check the status code of your web page with third-party tools such as HTTP Status Code Checker or with the URL Inspection Tool in Search Console.

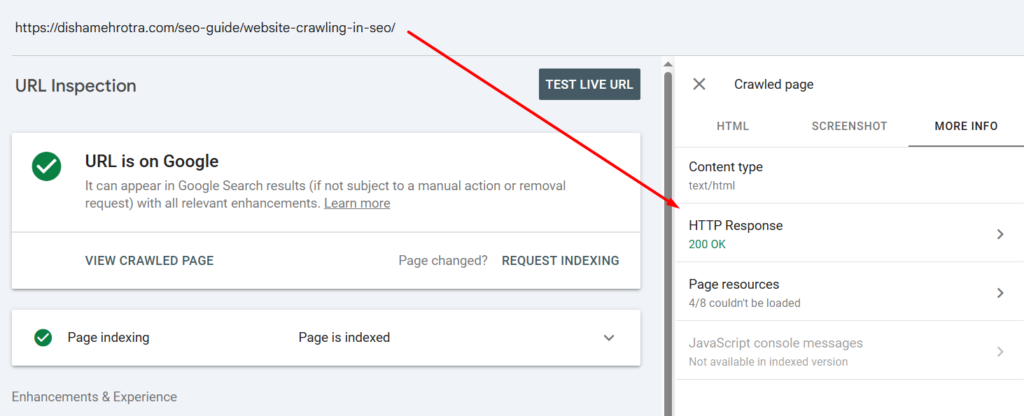

To check with the URL Inspection Tool in Search Console, follow these steps:

- Go to your Search Console account.

- Submit the URL in the URL Inspection Tool.

- When the status appears on your screen, click “View Crawled Page.”

- In the side panel that opens, go to the “More Info” section.

- Check the HTTP response of your web page.

Remember that pages returning client or server errors generally aren't indexed. Client errors (4xx series) occur when the request itself cannot be fulfilled, for example because the page does not exist (404). Server errors (5xx series), on the other hand, occur when the server fails to complete a valid request because of an internal problem.
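The status code families described above can be sketched in a few lines of Python using only the standard library. The `classify_status` and `fetch_status` helpers are hypothetical names chosen for this example. Note that `fetch_status` reports what your own client receives after following redirects, which is not necessarily what Googlebot sees, so the URL Inspection Tool remains the authoritative check.

```python
import urllib.error
import urllib.request


def classify_status(code: int) -> str:
    """Map an HTTP status code to the families discussed in this section."""
    if code == 200:
        return "success"
    if 400 <= code <= 499:
        return "client error"
    if 500 <= code <= 599:
        return "server error"
    return "other"


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Return the final HTTP status code for url (redirects are followed)."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # urllib raises HTTPError for 4xx and 5xx responses; the code is kept.
        return error.code


if __name__ == "__main__":
    for code in (200, 404, 503):
        print(code, classify_status(code))
```

To test a live page, call `classify_status(fetch_status("https://www.example.com/"))` with your own URL in place of the placeholder.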

3. The webpage has indexable content

Once you fulfill the two technical requirements above, that is, crawlers are not blocked from your web page and the page returns an HTTP 200 (success) status code, you have opened the gates for crawlers to check whether the page has indexable content.

Key rules for indexable content are:

- The content is in a file type that Google Search supports.

- The content does not violate the spam policies.

Spam Policies

To rank in search results, some companies adopt black hat SEO practices and manipulative methods. Google doesn’t support spammy methods and has created spam policies that websites must adhere to. Pages that violate them may rank lower or be omitted from search results entirely.

If you are an SEO beginner or a brand that has recently launched its website, make sure you avoid the following manipulative practices:

- Cloaking: Showing different content to users and search engines to manipulate rankings.

- Doorway abuse: Creating multiple similar pages just to rank for keywords and redirect users elsewhere.

- Expired domain abuse: Buying old domains with authority and filling them with unrelated or low-value content to rank faster.

- Hacked content: Content added to your site without permission, often to spread spam or harmful links.

- Hidden text and link abuse: Hiding keywords or links on a page so users can’t see them, but search engines can.

- Keyword stuffing: Overloading a page with keywords unnaturally just to manipulate rankings.

- Link spam: Creating or buying links only to boost rankings instead of providing real value.

- Machine-generated traffic: Using bots or automated tools to send fake traffic or queries to Google.

- Malicious practices: Hosting harmful software or deceptive content that can damage users’ devices or data.

- Misleading functionality: Promising a feature or tool but actually showing ads or something unrelated instead.

- Scaled content abuse: Creating large amounts of low-quality or unoriginal content just to rank in search.

- Scraping: Copying content from other websites and publishing it without adding any real value.

- Site reputation abuse: Publishing third-party content on a strong site just to take advantage of its ranking power.

- Sneaky redirects: Sending users to a different page than expected, often without their knowledge.

- Thin affiliation: Using affiliate pages with copied product info and no original insights or value.

- User-generated spam: Spam content posted by users in comments, forums, or profiles without proper moderation.

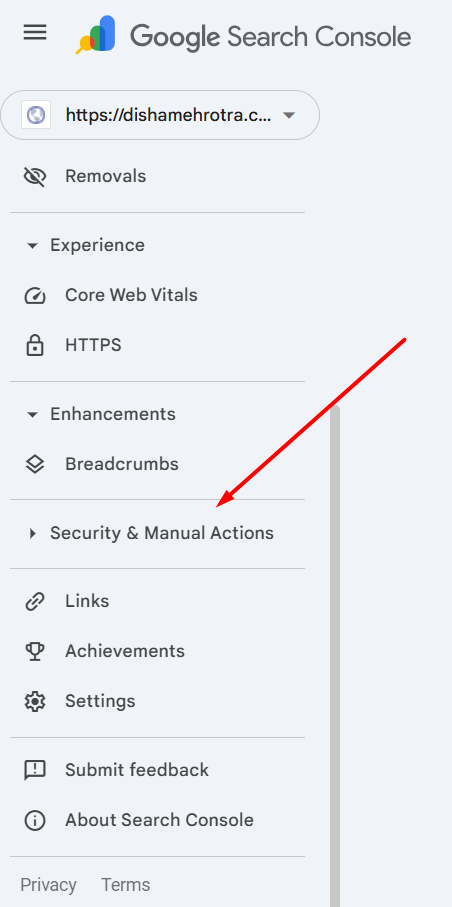

Google detects policy violations through automated systems and, where needed, human review. You can check whether your website has received a manual action in the Manual Actions report in Search Console.

Key Best Practices

Once you have checked the technical requirements and confirmed that your site follows the spam policies, the next step is to follow core practices that improve the site’s SEO. Some of the best practices that can affect your Google Search appearance include:

1. Reliable and Helpful Content for your Audience

Some people create content for search engines and miss the key point: the people in their target audience. Google has repeatedly stated that it considers people-first content, which addresses visitors’ queries and needs, to be reliable and helpful.

Ensure your content is comprehensive, easy to read, and insightful, and that it demonstrates experience, expertise, authoritativeness, and trustworthiness (E-E-A-T).

2. Keyword Optimization

Your web page content must use search terms or keywords meaningfully. When you describe your product, service, or blog topic, use the terms that people are likely to type when searching online.

Apart from adding these terms to the body content, place them strategically in headings, alt text, and link text. This helps Google understand the topic and context of the page. Do not overstuff keywords, or the content may sound promotional and violate the spam policies.
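One rough way to spot overstuffing is to measure what share of a page's words a single keyword takes up. The sketch below is only a heuristic under stated assumptions: `keyword_share` is a hypothetical helper, it handles single-word keywords only, and the article gives no numeric threshold, so the number is useful for comparing drafts rather than for passing or failing a page.

```python
import re
from collections import Counter


def keyword_share(text: str, keyword: str) -> float:
    """Return the fraction of words in text that equal keyword (case-insensitive)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    return Counter(words)[keyword.lower()] / len(words)


if __name__ == "__main__":
    stuffed = "Cheap shoes, cheap shoes! Buy cheap shoes"
    natural = "Our store sells comfortable running shoes at fair prices"
    print(round(keyword_share(stuffed, "cheap"), 2))   # 3 of 7 words
    print(round(keyword_share(natural, "shoes"), 2))   # 1 of 9 words
```

A draft in which one term accounts for a large share of all words usually reads unnaturally, which is the signal worth acting on.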

3. Crawlable Links

While reading a blog or service page, you have probably come across terms that are new to you. Such terms are much easier to understand when they are explained in detail on another page. But how do you reach that detailed explanation? Usually, it’s through internal links within the content.

Linking to relevant pages is another key practice: it helps crawlers discover content on your website and understand the site’s structure and the relationships between pages. It can also increase engagement.
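To see which links on a page a crawler is likely to follow, you can list the anchors that carry a real `href`. The sketch below uses Python's standard `html.parser`. It rests on an assumption that is not stated in this article: that crawlable links are `<a>` elements with an `href` attribute, while click handlers and custom attributes are not followed. The `LinkCollector` class, the `crawlable_links` helper, and the sample markup are hypothetical.

```python
from html.parser import HTMLParser
from typing import List


class LinkCollector(HTMLParser):
    """Collect href values from <a> elements only."""

    def __init__(self) -> None:
        super().__init__()
        self.links: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:  # skip anchors with a missing or empty href
            self.links.append(href)


def crawlable_links(html: str) -> List[str]:
    """Return the href of every <a href="..."> found in html."""
    collector = LinkCollector()
    collector.feed(html)
    return collector.links


if __name__ == "__main__":
    sample = (
        '<a href="/pricing">Pricing</a> '
        '<a onclick="go(\'/contact\')">Contact</a> '
        '<span data-href="/about">About</span>'
    )
    print(crawlable_links(sample))  # ['/pricing']
```

Only the first element in the sample is a plain link; the other two depend on scripts, so this check would flag them for review.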

4. Be Active in Communities

Once your website is optimized, people can find what you sell or offer. To keep them updated about new offers or services, it is equally important to stay active in communities and engage on different platforms.

5. Best Practices for All Content Types

Text is not the only element used when building a website. Developers also use images, videos, structured data, and JavaScript. You might be surprised to know that each of these elements has its own best practices that should be followed so crawlers can understand them properly.

6. Enable Features to Improve your Site Appearance

The design of the Google Search results page evolves over time as new features are introduced to improve the user experience. Apart from text elements, there are several visual elements (rich results, image results, video results, exploration features, attribution elements) that users can interact with. Where relevant, try to enable these features for your website to enhance visibility and engagement.

7. Control what you share with Google

There can be cases when you don’t want a specific page to be displayed in search results because it is meant only for users who are already on your website. In such a case, you can control what you share with Google, for example by blocking the page from being crawled or by excluding it from the index.
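One common way to keep a page out of search results, not spelled out in this article, is a robots meta tag with the `noindex` directive. The sketch below checks a page's HTML for that directive with Python's standard `html.parser`. The `RobotsMetaParser` class and `has_noindex` helper are hypothetical names. Keep in mind that a crawler has to be able to fetch the page to see the tag, so a page blocked in robots.txt cannot communicate `noindex` this way.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self) -> None:
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() not in ("robots", "googlebot"):
            return
        for directive in attributes.get("content", "").split(","):
            directive = directive.strip().lower()
            if directive:
                self.directives.add(directive)


def has_noindex(html: str) -> bool:
    """Return True if the page asks search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


if __name__ == "__main__":
    page = '<head><meta name="robots" content="noindex, nofollow"></head>'
    print(has_noindex(page))  # True
```

Running this against your important pages is a simple guard against a stray `noindex` hiding content you do want in search.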

These are some of the essential guidelines that brands and businesses must keep in mind. They do not cover all of SEO. To understand how each element and process works, first understand how search works.

Frequently Asked Questions

What are Google Search Essentials?

Google Search Essentials are a set of guidelines that help businesses and brands optimize their websites and make them eligible for visibility in search results. These guidelines cover technical requirements, spam policies, and best practices.

Does meeting Google Search Essentials guarantee rankings?

No. Following these guidelines does not guarantee the first position in search results. Even when you follow every rule, there can be cases where Google will not crawl, index, or rank your page. Your visibility and ranking depend on quality, relevance, and other factors.

Why are spam policies important?

Spam policies define harmful or manipulative practices that can lead to lower rankings or even removal from search results. Following them helps maintain trust and visibility.